Updated: April 2, 2026

Anthropic Source Code Leak Unleashes Massive Chaos

Anthropic accidentally exposed internal source code for Claude Code on March 31, 2026, after a public npm release shipped a debugging source map that let outsiders reconstruct the tool’s readable TypeScript codebase.

Anthropic said the incident came from human error in the release process, not a hack, and that no customer data, credentials, or model weights were exposed. The leak still spilled more than 512,000 lines of code and offered competitors and security researchers a rare look inside one of the AI industry’s most important coding products.

What Happened in the Breach

Anthropic’s Claude Code release 2.1.88 reached the public npm registry with a source map file attached. That file pointed to a zip archive containing nearly 2,000 files and more than 512,000 lines of source code, according to multiple reports. Security researcher Chaofan Shou drew public attention to the issue, and copies of the code spread across GitHub within hours.

Anthropic’s public line was consistent across outlets. Spokespeople said the incident exposed internal Claude Code source, not customer records, credentials, or the company’s core model weights, and they described it as a packaging or release-process mistake rather than an outside intrusion.

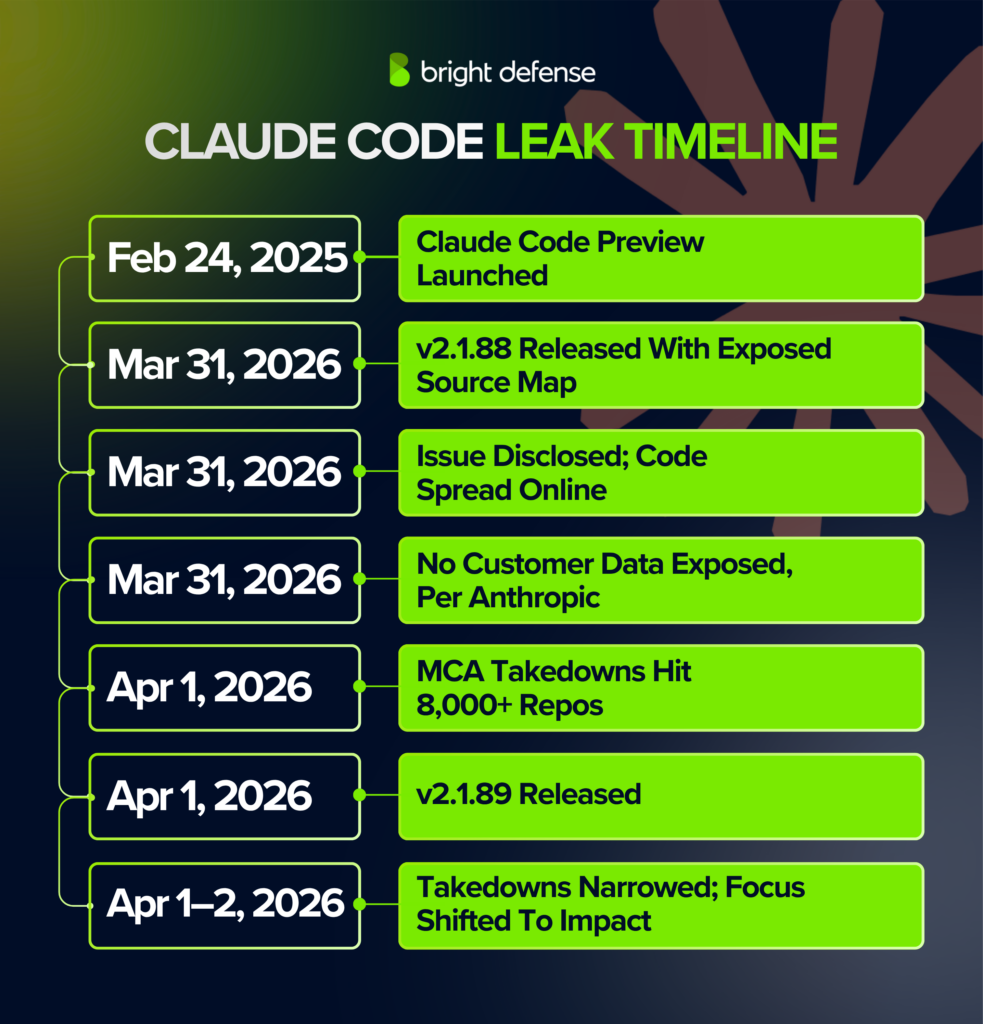

Timeline: From First Access To Latest Update

The chronology is now clear on the major points.

- February 24, 2025: Anthropic introduced Claude Code as a limited research preview alongside Claude 3.7 Sonnet, positioning it as an agentic command-line coding tool for developers.

- March 31, 2026: Anthropic published Claude Code release 2.1.88 to npm, and the package contained a debugging source map that exposed the readable codebase.

- March 31, 2026: Chaofan Shou publicized the issue, and mirrors of the code began spreading across GitHub and social media.

- March 31, 2026: Anthropic told reporters that no customer data or credentials were exposed and that the event was not a security breach.

- April 1, 2026: Anthropic began an aggressive DMCA response on GitHub, and reporting said the initial sweep affected more than 8,000 repositories.

- April 1, 2026: Anthropic released Claude Code v2.1.89, which GitHub lists as the latest public release at 01:07 on April 1.

- April 1 and April 2, 2026: Anthropic said the takedown sweep reached more repositories than intended and narrowed the notice, while public reporting focused on the leak’s competitive and operational fallout rather than customer harm.

What Data Or Systems Were Affected

The exposed material was Claude Code’s internal application source, not the underlying Claude model weights. Reports said the package revealed architecture, prompts, tooling logic, feature flags, internal comments, and references to unlaunched functions, including background-agent and companion-style features.

The company said no sensitive customer data or credentials were involved. Public reporting reviewed for this story did not identify leaked payment information, government identification numbers, passwords, access tokens, or internal employee records tied to end users. The affected asset was intellectual property and operational design, not a consumer data trove.

Who Was Responsible (Confirmed Vs Alleged)

The confirmed account points to Anthropic’s own release workflow. Bloomberg reported that Anthropic chief commercial officer Paul Smith called the incident the result of “process errors” tied to the startup’s fast product release cycle and said it was “absolutely not breaches or hacks.” Anthropic spokespeople gave similar accounts to Axios, The Verge, and Business Insider.

No public evidence has emerged that a hacker broke into Anthropic’s systems to obtain the code. The post-leak mirrors, forks, and reimplementations came after the package reached the public registry. Claims that the leak reflected sabotage, insider malice, or a separate compromise remain unconfirmed in current reporting.

How The Leak Occurred

This incident does not fit the classic pattern of a network intrusion. Anthropic appears to have shipped a debugging artifact inside a public software package, and that artifact exposed a path to the full readable codebase. In practical terms, the company handed the internet a map to its own source.

Source maps are meant for development and debugging. When they escape into production, they can reveal the structure and content of code that would otherwise remain obfuscated or bundled. That is why this leak mattered so much: the exposure turned Claude Code from a black box into a detailed blueprint for rivals, researchers, and anyone else willing to inspect it.
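To make the mechanism concrete, here is a minimal Python sketch of how a Source Map v3 file can carry original source text. The map below is hand-written and hypothetical; the file names, contents, and the `recover_sources` helper are illustrative assumptions, not material from the Claude Code package. Reporting says the leaked map pointed to an archive of the source, so the actual file may have referenced the code rather than embedding it as this example does.

```python
import json
from typing import Dict

# Hypothetical, hand-written source map in the Source Map v3 layout.
# Nothing here comes from the leaked package.
EXAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts", "../src/tools/shell.ts"],
    # Optional field: original text for each entry in "sources".
    "sourcesContent": [
        "export function main(): void {\n  console.log('hello');\n}\n",
        None,  # content not embedded for this entry
    ],
    "mappings": "",  # position mappings omitted; not needed for this sketch
})


def recover_sources(raw_map: str) -> Dict[str, str]:
    """Return {original path: original text} for every embedded source."""
    data = json.loads(raw_map)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    for path, text in zip(sources, contents):
        if text is not None:
            recovered[path] = text
    return recovered


if __name__ == "__main__":
    for path, text in recover_sources(EXAMPLE_MAP).items():
        print(f"{path}: {len(text.splitlines())} lines recovered")
```

The point of the sketch is that no decompilation is needed: when a map embeds or links the original files, reading them back is a few lines of JSON handling.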

Impact and Risks for Customers

The direct risk to Claude users appears limited so far because Anthropic says no customer data, credentials, or model weights were exposed. Public reporting has not described identity theft, fraudulent charges, or mass account compromise tied to this event.

The indirect risk is more serious for enterprise users and for Anthropic itself. The leaked code gave outsiders visibility into guardrails, internal tooling, memory systems, and unlaunched features. Analysts quoted in coverage said that kind of exposure can help bad actors study ways around protective controls and help competitors shorten their own development cycles.

Company Response And Customer Remediation

Anthropic moved on three fronts. It told reporters the release was a human-error packaging issue, it pushed a newer public release, and it launched DMCA takedown requests against GitHub repositories that hosted the leaked code. GitHub’s public release page shows v2.1.89 as the latest version on April 1, 2026, which marks the clearest public technical update after the faulty package.

Customer remediation was limited because Anthropic says the incident did not expose customer information. No public reporting reviewed for this story shows refunds, vouchers, credit monitoring, password resets, or mass customer notifications tied to personal-data exposure. Anthropic instead framed the response around internal process fixes and repository takedowns.

Government, Law Enforcement, And Regulator Actions

As of April 2, 2026, public reporting reviewed for this story had not identified any announced law-enforcement investigation, regulator notice, breach-filing action, or court case tied specifically to the Claude Code leak. Coverage focused on Anthropic’s own explanation, GitHub copyright enforcement, and the business fallout.

That absence makes sense on the facts known so far. The incident looks like an intellectual-property and operational-security failure, not a consumer-data breach of the sort that usually triggers mandatory notifications or credit monitoring. That reading could change if later reporting shows a wider exposure, but no such confirmation has surfaced yet.

Financial, Legal, And Business Impact

The leak hit Anthropic at a sensitive moment. The company announced on February 12, 2026 that it had raised $30 billion at a $380 billion post-money valuation, and Bloomberg described Claude Code as a key moneymaker. A source-code spill at that scale risks weakening Anthropic’s edge in the crowded market for AI coding agents just as the company pushes deeper into enterprise software and public-market ambition.

The episode created a legal and reputational wrinkle as well. Anthropic responded with copyright takedowns, which put it in the position of enforcing intellectual-property rights at a time when AI companies face intense copyright scrutiny over training data and model development. No leak-specific lawsuit has been reported yet, but the public optics were poor for a company that sells safety and operational maturity as core parts of its brand.

What Remains Unclear About the Leak

The exact internal failure path is still not public. Reporting has established that a source map escaped into a public package and that Anthropic blames process errors, but the company has not publicly detailed the precise build step, review breakdown, or publishing control that failed.

The full downstream spread remains unclear as well. Public reports cite more than 8,000 affected repositories in the broad initial takedown sweep and say Anthropic later narrowed that action, with the WSJ reporting the revised focus at 96 instances. Public reporting still does not pin down how many copies were downloaded before cleanup, how many developers reviewed the code offline, or which unlaunched features were genuine roadmap items rather than experiments.

Why This Incident Matters

This incident matters because it shows how a safety-focused AI company can suffer a plain software-release failure with major strategic consequences. Anthropic did not lose control through a sophisticated break-in. It appears to have lost control through routine software distribution, which is a harder lesson for the rest of the industry because it can happen inside normal product velocity.

The leak matters for cybersecurity professionals because it is a clean example of modern CI/CD and artifact-governance risk. A single packaging mistake exposed intellectual property, future features, and internal control logic to the public internet in hours. For AI companies racing to ship agentic tools, the story is not only about secrecy. It is about release hygiene, artifact review, repository control, and the operational discipline needed to keep sensitive code out of public channels.
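One way to picture the artifact review described above is a pre-publish gate that inspects the packed tarball and blocks the release if debugging artifacts are present. The Python sketch below is an assumption about how such a check could look, not a description of Anthropic’s pipeline or of any specific tool. The `find_debug_artifacts` helper and the list of scanned file suffixes are hypothetical.

```python
import re
import tarfile
from typing import BinaryIO, List

# Matches the trailing comment bundlers add to link a file to its source map.
SOURCE_MAP_COMMENT = re.compile(rb"[#@]\s*sourceMappingURL=")
# Assumed set of bundled text files worth scanning for that comment.
TEXT_SUFFIXES = (".js", ".mjs", ".cjs", ".css")


def find_debug_artifacts(tarball: BinaryIO) -> List[str]:
    """List source-map findings in a gzip-compressed package tarball."""
    findings = []
    with tarfile.open(fileobj=tarball, mode="r:gz") as archive:
        for member in archive.getmembers():
            if not member.isfile():
                continue
            if member.name.endswith(".map"):
                findings.append(f"source map file: {member.name}")
            elif member.name.endswith(TEXT_SUFFIXES):
                handle = archive.extractfile(member)
                if handle and SOURCE_MAP_COMMENT.search(handle.read()):
                    findings.append(f"sourceMappingURL comment: {member.name}")
    return findings
```

In a release pipeline, a step like this could open the `.tgz` produced by `npm pack` in binary mode, pass it to the function, and fail the job when the returned list is not empty. Scanning the packed artifact rather than the source tree is a deliberate choice here: it checks what would actually reach the registry.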

How Bright Defense Can Reduce Release Leak Risk

Anthropic’s mishap shows how one release error can turn internal code into public intelligence overnight. Incidents like this are common across fast-moving software teams when publishing paths, cloud storage, and review gates change faster than security checks.

Bright Defense can help reduce that risk with penetration testing that examines exposed assets, internet-facing weaknesses, and release-adjacent attack paths, plus continuous cybersecurity compliance that keeps secure SDLC, access control, and change-management controls under constant review. That combination helps teams catch packaging mistakes, exposed storage, and broken approval gates before a public release. Talk to Bright Defense.

FAQ

The incident involved the accidental public exposure of about 500,000 lines of Claude Code source, which reporters described as giving outsiders a close view of the tool’s internal implementation and roadmap items.

Public reporting placed the disclosure on March 31, 2026, with follow-on coverage published on April 1, 2026.

Coverage described roughly 1,900 to nearly 2,000 files and around 500,000 to 512,000 lines of code.

Reporting attributed the exposure to a packaging or release process mistake where a debug or source-map related artifact made internal code accessible through a public distribution channel.

Anthropic’s statement to reporters said no sensitive customer data or credentials were involved, and coverage also distinguished the leaked Claude Code tooling from core model weights.

A broad, immediate reset is not implied by Anthropic’s “no credentials exposed” statement, and a sensible response focuses on targeted checks such as monitoring for unusual logins, reviewing API key usage, and rotating secrets that were already due for rotation.

Leaked source can increase risk when it reveals internal control logic and operational details, and reporting noted the exposure included harness or tooling code that could be valuable to competitors and could inform attacker tradecraft.

Coverage described rapid mirroring and cloning activity, with takedown activity affecting more than 8,000 copies at one stage and later narrowing in scope in at least one account.

Risk drops when you delete local copies, remove any derived artifacts from shared repos, and confirm your environment did not ingest unknown code into build pipelines, since the main issue centers on IP exposure and downstream reuse.

Teams reduce similar events with release hardening steps that treat build artifacts, debug files, and source maps as controlled outputs, plus deployment automation that reduces manual release mistakes, which matches the “human error during packaging” framing used in reporting.

Yes. Anthropic said a Claude Code release included some internal source code, and reporting put the leak at roughly 500,000 to 512,000 lines tied to Claude Code.

Yes, but the leaked code was tied to Claude Code, not evidence that Claude model weights were exposed. Anthropic also said no sensitive customer data or credentials were involved.

Usually yes when source code is copied, taken, or shared without authorization. In the U.S., computer programs are protected under copyright law, and federal trade-secret law can also criminalize unauthorized copying, downloading, uploading, or transmitting trade-secret information.

Sources

- Bloomberg – Anthropic Rushes to Limit Leak of Claude Code Source Code (April 1, 2026)

https://www.bloomberg.com/news/articles/2026-04-01/anthropic-scrambles-to-address-leak-of-claude-code-source-code

- Bloomberg – Anthropic Executive Blames Claude Code Leak on ‘Process Errors’ (April 1, 2026)

https://www.bloomberg.com/news/articles/2026-04-01/anthropic-executive-blames-claude-code-leak-on-process-errors

- The Wall Street Journal – Anthropic Races to Contain Leak of Code Behind Claude AI Agent (April 1, 2026)

https://www.wsj.com/tech/ai/anthropic-races-to-contain-leak-of-code-behind-claude-ai-agent-4bc5acc7

- The Guardian – Claude’s code: Anthropic leaks source code for AI software engineering tool (April 1, 2026)

https://www.theguardian.com/technology/2026/apr/01/anthropic-claudes-code-leaks-ai

- Business Insider – Anthropic accidentally exposed part of Claude Code’s internal source code (April 1, 2026)

https://www.businessinsider.com/anthropic-leak-reveals-claude-code-internal-source-code-2026-3

- Business Insider – A 4 a.m. scramble turned Anthropic’s leak into a ‘workflow revelation’ (April 1, 2026)

https://www.businessinsider.com/claude-code-leak-what-happened-recreated-python-features-revealed-2026-4

- Axios – Anthropic leaked 500,000 lines of its own source code (March 31, 2026)

https://www.axios.com/2026/03/31/anthropic-leaked-source-code-ai

- The Verge – Claude Code leak exposes a Tamagotchi-style ‘pet’ and an always-on agent (March 31, 2026)

https://www.theverge.com/ai-artificial-intelligence/904776/anthropic-claude-source-code-leak

- Anthropic – Anthropic raises $30 billion in Series G funding at $380 billion post-money valuation (February 12, 2026)

https://www.anthropic.com/news/anthropic-raises-30-billion-series-g-funding-380-billion-post-money-valuation

- Anthropic – Claude 3.7 Sonnet and Claude Code (February 24, 2025)

https://www.anthropic.com/news/claude-3-7-sonnet

- GitHub – Releases: anthropics/claude-code (April 1, 2026)

https://github.com/anthropics/claude-code/releases

- Reuters – Anthropic clinches $380 billion valuation after $30 billion funding round (February 12, 2026)

https://kelo.com/2026/02/12/anthropic-valued-at-380-billion-in-latest-funding-round/
