Updated: May 7, 2026

150+ Deepfake Statistics (March 2026)

Deepfake fraud attempts have surged 2,137% in the last three years, and in 2024, a new deepfake attack was attempted every five minutes. The team at Bright Defense has compiled a comprehensive, up-to-date list of 150+ deepfake statistics for 2025 and 2026. In this article, you’ll find hand-picked statistics about:

- Overall deepfake growth and projections

- Financial impacts and fraud losses

- Identity verification and biometric fraud

- Detection and accuracy challenges

- Targets, platforms, and political use

- Attack vectors and threat actor tactics

- Industry-level deepfake impacts

- Regional and country-level trends

- Major incidents and notable fraud cases

- Organizational readiness and human impact

- Elections and disinformation

- The deepfake detection and AI market

Without further ado, let’s check out the stats!

Overall Deepfake Growth and Projections

- Deepfake content on social media grew 550% between 2019 and 2023. (Deloitte)

- Deepfake fraud attempts increased 2,137% over the last three years, rising from 0.1% to 6.5% of all fraud attempts. (Signicat)

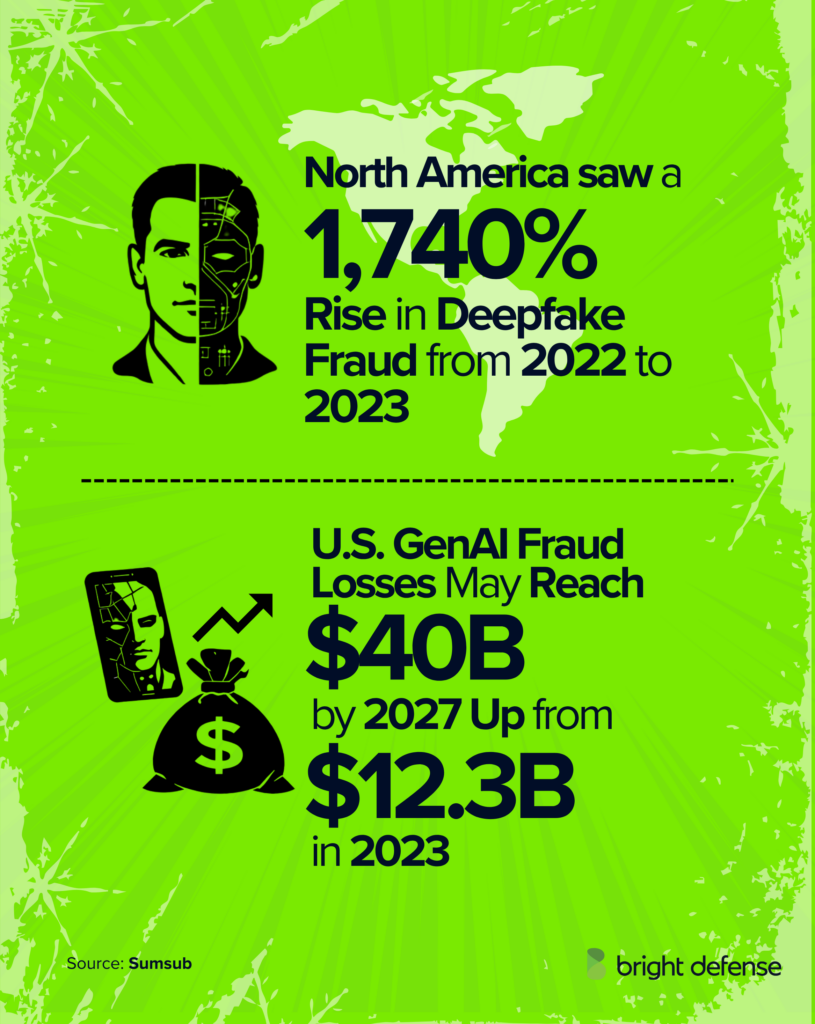

- Deepfake fraud incidents increased tenfold between 2022 and 2023, with a 1,740% surge in North America alone. (Sumsub)

- Voice deepfakes rose 680% year-over-year in 2024. (Pindrop)

- Deepfake fraud attempts rose by more than 1,300% in 2024, jumping from an average of one per month to seven per day. (Pindrop)

- A 26% increase in fraud attempts was recorded in 2024. (Pindrop)

- Synthetic voice fraud in insurance rose 475% in 2024, while banking experienced a 149% rise. (Pindrop)

- Deepfake fraud could rise 162% in 2025. (Pindrop)

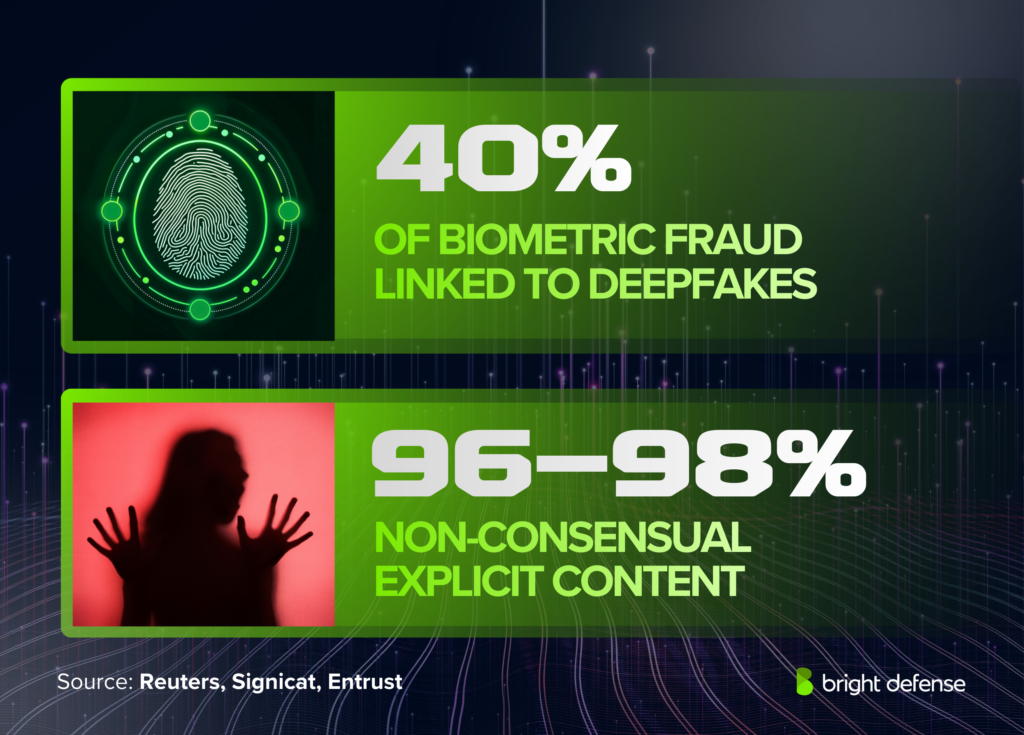

- Deepfakes now account for 40% of all biometric fraud. (Entrust)

- Digital document forgeries increased 244% year over year, representing a 1,600% surge since 2021. (Entrust)

- From 2017 to 2022, only 22 deepfake incidents were recorded. That number climbed to 42 in 2023, 150 in 2024, and 179 in Q1 2025 alone, surpassing all of 2024 by 19%.

- Resemble AI recorded 2,031 verified deepfake incidents in Q3 2025 alone. (Resemble AI)

- The number of deepfakes detected worldwide quadrupled from 2023 to 2024, with deepfakes accounting for 7% of all fraud attempts. (Sumsub)

- The global rate of identity fraud nearly doubled from 2021 to 2023. (Sumsub)

- Roughly 500,000 video and voice deepfakes were shared on social media globally in 2023, a figure estimated to have reached 8 million by 2025. (DeepMedia via Reuters)

- 49% of companies globally reported being targeted by audio or video deepfake fraud by 2024. (Regula)

- Credential phishing attacks increased by 703% in the second half of 2024, while phishing email messages rose 202% over the same period. (Keepnet)

- Deepfake phone calls are forecast to rise 155% in 2025. (Pindrop)

- Fraud attempts now occur every 46 seconds in contact centers. (Pindrop)

- Deepfake face swap attacks used for identity verification bypasses increased 704% in 2023. (Pindrop)

- 85% of organizations experienced one or more deepfake-related incidents in the past 12 months, a 10% increase year-over-year. (IRONSCALES)

- Over 40% of those organizations experienced three or more deepfake attacks. (IRONSCALES)

- 80% of deepfake content shared on Telegram channels is pornographic. (Keepnet)

- 96–98% of all deepfake content online is non-consensual explicit imagery, and 99–100% of victims are female. (Keepnet)

Deepfake Stats on Financial Impacts and Losses

- Generative AI fraud in the U.S. is expected to hit $40 billion by 2027, up from $12.3 billion in 2023, a compound annual growth rate of 32%. (Deloitte)

- In 2024, businesses lost an average of nearly $500,000 per deepfake-related incident. Some large enterprises experienced losses up to $680,000. (Keepnet)

- IRONSCALES’ 2025 report found mean losses exceeding $280,000 per deepfake incident. (IRONSCALES)

- 61% of organizations that lost money in a deepfake attack reported losses over $100,000. (IRONSCALES)

- Nearly 19% of those organizations reported losses of $500,000 or more, and over 5% lost $1 million or more. (IRONSCALES)

- About 55% of organizations reported financial losses from deepfake or AI-voice fraud in the past 12 months. (IRONSCALES)

- Contact center fraud could reach $44.5 billion in 2025. (Pindrop)

- The FBI counted 21,832 business email fraud cases in 2022, producing about $2.7 billion in losses. (Deloitte)

- Deloitte estimates GenAI email fraud losses could reach $11.5 billion by 2027 under an aggressive adoption scenario. (Deloitte)

- Financial losses from deepfake-enabled fraud exceeded $200 million during Q1 2025 in North America. (Resemble AI via Variety)

- 385 incidents in Resemble AI’s Q3 2025 data involved direct financial losses. (Resemble AI)

- 77% of voice-clone scam victims reported a monetary loss. More than half of those targeted lost money, and about one-third lost over $1,000. (McAfee)

- Deepfake incidents in fintech increased 700% in 2023. (Deloitte)

- The cryptocurrency sector saw deepfake-related incidents rise 654% from 2023 to 2024. Crypto platforms saw the highest rate of fraudulent activity attempts, rising 50% year-over-year from 6.4% in 2023 to 9.5% in 2024. (Sumsub)

- Dark web scamming software is sold from about $20 to thousands of dollars, lowering the barrier to entry for deepfake scams. (Deloitte)

- 88% of all deepfake cases detected in 2023 were concentrated in the crypto sector. (Sumsub)

- Global scam losses across all AI-enabled fraud reached $1 trillion in 2024. (Sift)

- 33% of consumers say someone attempted to scam them using AI, up from 29% the previous year, and 27% of those targeted were successfully defrauded, up from 19%. (Sift)

- 57% of crypto firms faced audio deepfake incidents in 2024, with average losses around $440,000. (Regula)

Deepfake Stats on Identity Verification and Biometric Fraud

- Deepfakes now account for 40% of all biometric fraud, up dramatically from the previous year. (Entrust)

- Deepfakes comprise 24% of fraudulent attempts to pass motion-based biometrics checks. (Entrust via Infosecurity Magazine)

- Cryptocurrency ranked as the most targeted industry, followed by lending and traditional banks. (Entrust)

- National ID cards were identified as the most vulnerable document type. (Entrust)

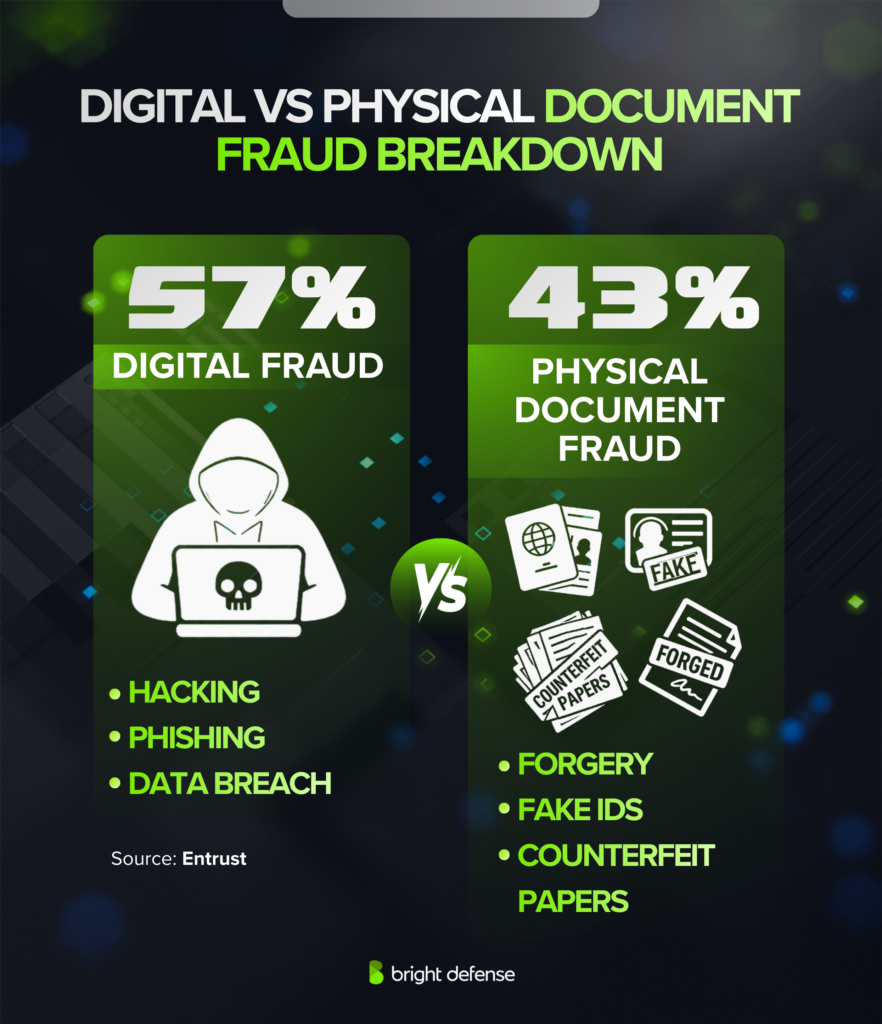

- Digital document forgery surpassed physical counterfeits for the first time in 2024, with digital forgeries accounting for 57% of all document fraud. (Entrust)

- 42.5% of fraud attempts detected in the financial sector are now AI-driven. (Signicat)

- Only 22% of financial institutions have implemented AI-based fraud prevention tools. (Signicat)

- Biometric authentication systems endure three times fewer fraudulent attempts than document-based systems. (Keepnet)

- Gartner predicts that by 2026, 30% of enterprises will no longer rely solely on identity verification to prevent fraud. (Keepnet)

Deepfake Detection and Accuracy Challenges

- Human detection rates for high-quality video deepfakes are just 24.5%. (Keepnet)

- A 2025 iProov study found that only 0.1% of participants correctly identified all fake and real media shown to them. (iProov)

- Participants were 36% less likely to correctly identify a synthetic video compared to a synthetic image. (iProov)

- A 2024 meta-analysis of 56 studies found that overall human deepfake detection accuracy averages 55.54% across modalities, barely above chance. (ScienceDirect)

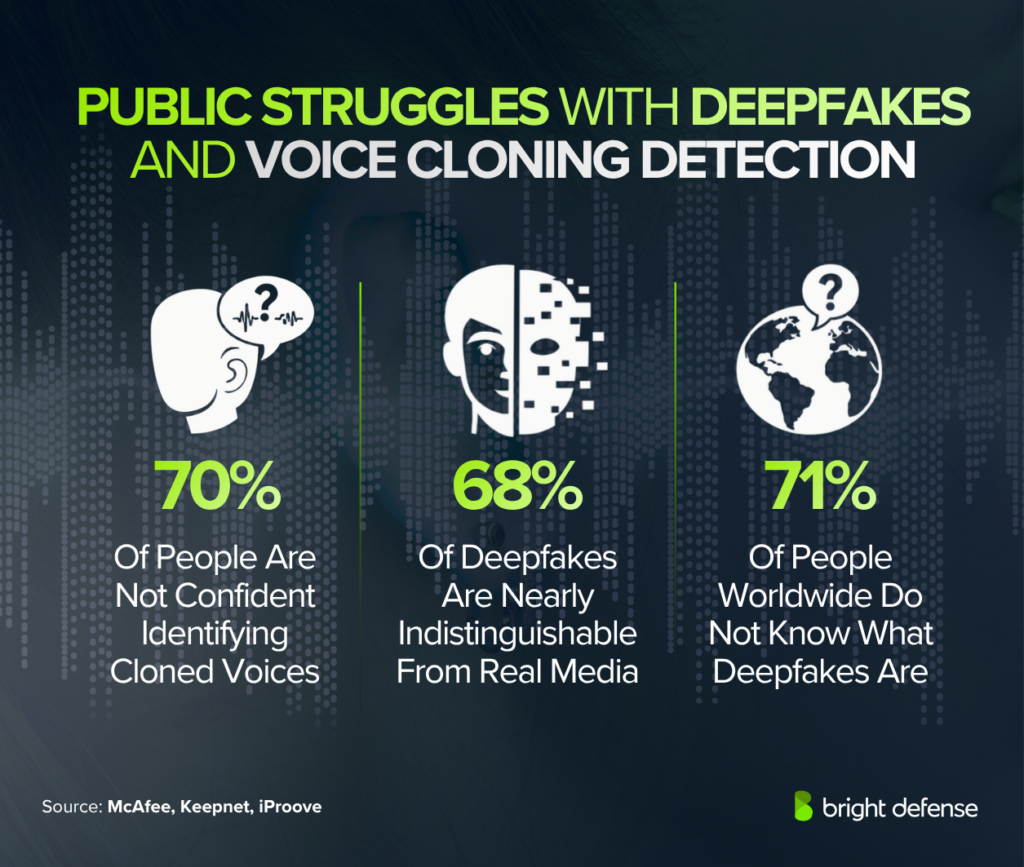

- 70% of people said they are not confident they can tell the difference between a real and cloned voice. (McAfee)

- 68% of deepfakes are now “nearly indistinguishable from genuine media.” (Keepnet)

- The effectiveness of defensive AI detection tools drops by 45–50% when used against real-world deepfakes outside controlled lab conditions. (World Economic Forum)

- Advanced multi-modal detection systems achieve 94–96% accuracy under controlled conditions, but widely available detection technology catches only about 65% of deepfakes. (World Economic Forum)

- Voice cloning now requires only 20–30 seconds of audio to produce a convincing replica of a target’s voice. (SoftwareSeni)

- A convincing 60-second deepfake video can be created in under 25 minutes at zero cost using freely available tools. (Security Hero)

- 84% of respondents familiar with generative AI said AI-generated content should always be clearly labeled. (Deloitte)

- 71% of people worldwide do not know what deepfakes are. (iProov)

- The deepfake detection market is expected to grow 42% annually, rising from $5.5 billion in 2023 to $15.7 billion by 2026. (Deloitte)
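
The growth rate in the last statistic can be sanity-checked with the standard compound annual growth rate formula. The sketch below is only an illustration: the `cagr` helper is a hypothetical name, and it assumes the $5.5 billion and $15.7 billion figures mark the start and end of a three-year span (2023 to 2026).

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.42 means 42% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the Deloitte market statistic above, in billions of USD.
# Assumption: growth compounds once per year from 2023 to 2026.
detection_market_cagr = cagr(5.5, 15.7, years=2026 - 2023)
print(f"Implied annual growth: {detection_market_cagr:.1%}")
```

Under those assumptions the implied rate rounds to roughly 42% per year, which is consistent with the figure quoted in the statistic.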

Attack Vectors and Threat Actor Tactics

- Email-based deepfake attacks and static image manipulation are tied as the most common threat vectors at 59.3% each. (IRONSCALES)

- Recorded audio/voice deepfakes rose from 25% of organizations encountering them in 2024 to 52% in 2025. (IRONSCALES)

- Recorded video deepfakes climbed from 33% in 2024 to 46% in 2025. (IRONSCALES)

- Live video manipulation increased from 30% to 41% year-over-year, with live voice deepfakes showing identical growth. (IRONSCALES)

- AI-generated phishing emails achieve 54% click-through compared with 12% for manually crafted phishing. (Keepnet)

- 82% of phishing emails are now generated by AI tools. (Sift)

- Open-source tool DeepFaceLab powers over 95% of deepfake videos. (Keepnet)

- Only 3 seconds of audio can generate an 85% accurate voice clone. (McAfee)

- 53% of people share their voice online at least weekly via YouTube, social media, or podcasts. (McAfee)

- Searches for “free voice cloning software” increased 120% between July 2023 and July 2024. (Keepnet)

- Fraudsters use 36% more payment methods than legitimate users and 82% more in digital commerce, while using 20% fewer IP addresses. (Sift)

- Peak deepfake fraud activity occurs during late-night hours between 10 p.m. and 5 a.m. local time. (Sift)

- Phishing reports increased 466%, AI-enabled scams rose 456%, and compromised personal data surged 186% in Q1 2025. (Sift)

- Blocked scam content increased 50% in Q1 2025 compared with Q1 2024. (Sift)

Deepfake Targets and Platform Distribution (Q3 2025)

- 48.7% of Q3 2025 deepfake incidents targeted celebrities and public figures. (Resemble AI)

- 48.3% targeted businesses. (Resemble AI)

- Women were targeted 4.5 times more often than men in individual-target deepfakes. (Resemble AI)

- 34.6% of targets were female, 7.7% male, and 50% were non-person targets. (Resemble AI)

- 331 incidents in Q3 2025 involved minors, representing 16.3% of all cases. (Resemble AI)

- Will Smith: 24 incidents. (Resemble AI)

- Barack Obama: 15 incidents. (Resemble AI)

- Donald Trump: 13 incidents. (Resemble AI)

- Marco Rubio: 10 incidents. (Resemble AI)

- Alexandria Ocasio-Cortez: 7 incidents. (Resemble AI)

- LeBron James: 6 incidents. (Resemble AI)

- Taylor Swift: 5 incidents. (Resemble AI)

- YouTube: 29.9% of tracked cases. (Resemble AI)

- Instagram: 26.8%. (Resemble AI)

- Facebook: 18.8%. (Resemble AI)

- TikTok: 18.3%. (Resemble AI)

- WhatsApp: 6.3%. (Resemble AI)

- 482 politically motivated deepfake incidents in Q3 2025, representing 23.7% of all cases. (Resemble AI)
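
Several of the Q3 2025 shares above can be recomputed from the raw counts. This is a minimal sketch, assuming the 2,031 verified incidents Resemble AI recorded for the quarter is the denominator for each percentage; the `share` helper and variable names are illustrative only.

```python
TOTAL_INCIDENTS_Q3_2025 = 2031  # verified incidents reported by Resemble AI


def share(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Counts taken from the statistics in this section.
minors_share = share(331, TOTAL_INCIDENTS_Q3_2025)
political_share = share(482, TOTAL_INCIDENTS_Q3_2025)

# Ratio of female to male targets, from the 34.6% and 7.7% shares.
female_to_male_ratio = round(34.6 / 7.7, 1)

print(minors_share, political_share, female_to_male_ratio)
```

With that denominator, the recomputed values line up with the 16.3%, 23.7%, and 4.5x figures quoted in the list.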

Industry-Level Deepfake Impacts

- 88% of deepfake fraud cases occurred in cryptocurrency in 2023, with fintech accounting for 8%. (Sumsub)

- In 2024, crypto deepfake fraud attempts constituted 9.5% of identity fraud, lending and mortgage industries 5.4%, and traditional banks 5.3%. (Entrust)

- Sumsub’s Q1 2024 report recorded deepfake incidents increasing 1,520% in iGaming, 900% in online marketplaces, 533% in fintech, 217% in crypto, 138% in consulting, and 68% in online media. (Sumsub)

- 53% of finance professionals reported being targeted by deepfake scams, and 43% admitted falling for one. (Keepnet)

- 25.9% of executives experienced at least one deepfake incident. (Keepnet)

- Retail contact centers saw a 100% rise in deepfake fraud, with one fraudulent call now occurring every 127 calls, five times more frequent than in financial institutions. (Pindrop)

- In 2025, retail contact centers could see deepfake fraud calls increase to one every 56 calls. (Pindrop)

- 80% of professionals consider deepfakes a business risk, yet only 29% have implemented mitigation measures and 46% have no mitigation plan. (Eftsure)

- 92% of executives are concerned about risks from generative AI. (Eftsure)

Regional and Country-Level Deepfake Trends

- North America recorded a 1,740% increase in deepfake fraud between 2022 and 2023. (Sumsub)

- The Asia-Pacific region saw a 1,530% increase in deepfake fraud during the same period. (Sumsub)

- Sumsub’s Q1 2024 data showed deepfake incidents jumped 303% in the U.S. and 280% in India. (Sumsub)

- South Africa and Mexico each recorded 500% increases in deepfakes. (Sumsub)

- South Korea exhibited the highest deepfake fraud growth globally at 1,625%. (Sumsub)

- Bulgaria saw a 3,000% spike; Portugal 1,700%; Indonesia 1,550%; Turkey 1,533%; Singapore 1,100%; Hong Kong 1,000%; Moldova 900%; Brazil 822%; Belgium 800%. (Sumsub)

- Middle East deepfake incidents increased 643%, Africa 393%, and Latin America & the Caribbean 255%. (Sumsub)

- Some nations saw declines: the UK’s deepfake incidents fell 10%; Croatia 33%; Ireland 40%; Lithuania 44%. (Sumsub)

- GenAI-enabled fraud losses in the U.S. could rise from $12.3 billion in 2023 to $40 billion by 2027. (Deloitte)

- Financial losses from deepfake-enabled fraud exceeded $200 million during Q1 2025 in North America. (Resemble AI)

- 46 U.S. states have enacted deepfake legislation since 2022, with 146 bills introduced in 2025 alone.

- The federal TAKE IT DOWN Act became law in May 2025.

- 67.5% of U.S. consumers express concerns about deepfake and voice-clone threats in banking. (Pindrop)

- The UK experienced a 300% rise in deepfake cases from 2022 to 2023, while Europe as a whole saw a 780% rise. (Sumsub)

- In the first half of 2023, Britain lost £580 million to fraud, with £43.5 million stolen through impersonations using deepfakes.

- £6.9 million was lost to CEO impersonation fraud in the first half of 2023. (UK Finance)

- In 2019, a UK energy firm was defrauded of €220,000 via a deepfaked voice clone of its CEO. (Keepnet)

- UK organizations reporting AI-driven fraud attempts jumped from 23% in 2024 to 35% in early 2025. (Experian)

- More than 100 deepfake video ads impersonating PM Rishi Sunak appeared on Meta in a single month. (Fenimore Harper)

Deepfake Stats on Elections and Disinformation

- Between December 8, 2023 and January 8, 2024, researchers identified more than 100 deepfake video ads impersonating UK Prime Minister Rishi Sunak on Meta. (World Economic Forum)

- NewsGuard debunked false election claims pushed across more than 370 sites in 2024. (NewsGuard)

- NewsGuard identified approximately 9 new election-related false claims per week and cataloged 100 false claims circulating online between September 1 and November 18, 2024. (NewsGuard)

- A WEF contributor cited an automated disinformation project built with widely available AI tools for less than $400 per month. (World Economic Forum)

- In January 2024, thousands of New Hampshire voters received a robocall with a deepfaked voice resembling President Biden urging them not to vote. The deepfake cost $1 and took under 20 minutes to produce. (Keepnet)

Organizational Readiness and Human Impact

- 88% of organizations have implemented deepfake-specific cybersecurity training, up from 68% in 2024. (IRONSCALES)

- Only 8.4% of organizations achieved simulation pass rates above 80% during deepfake exercises. The average first-try pass rate was 44%. (IRONSCALES)

- 74% of organizations recorded simulation pass rates below 60%. (IRONSCALES)

- 63% of respondents had not yet invested in deepfake defense, though 71% plan to make it a top priority within 12–18 months. (IRONSCALES)

- 80% of boards requested briefings on AI-generated deepfake risk within the past year. (IRONSCALES)

- 99% of respondents claim confidence in their deepfake defenses, yet over 63% described themselves as “very concerned” about the threats, a 15+ percentage point increase from the previous year. (IRONSCALES)

- 80% of companies lack formal protocols to respond to deepfake attacks. (Keepnet)

- More than 50% of business leaders admit employees have never received training on identifying deepfakes. (Keepnet)

- Only 5% of companies have a comprehensive deepfake prevention strategy. (Keepnet)

- 25% of company leaders have little to no understanding of deepfake technology. (Keepnet)

- 32% of leaders lack confidence in employees’ ability to detect or respond to deepfakes. (Keepnet)

- 72% of consumers are constantly worried about being deceived by deepfakes. (Keepnet)

- 47% of incident responders reported burnout, and 69% considered leaving their jobs. (VMware)

- 66% of cybersecurity professionals encountered deepfake incidents in 2022, a 13% increase from the prior year. (VMware)

Deepfake Detection and AI Market Stats

- The Generative AI market is projected to reach approximately $400 billion by 2031, with a CAGR of 37.57% between 2025 and 2031. (Statista)

- Analysts expect the deepfake detection market to grow 42% annually, rising from $5.5 billion in 2023 to $15.7 billion in 2026. (Deloitte)

- 46% of fraud experts have encountered synthetic identity fraud, 37% voice deepfakes, and 29% video deepfakes. (Statista)

- Mastercard’s fraud tooling scans 1 trillion data points per transaction to judge whether it is genuine. (Deloitte)

- Google’s SynthID has watermarked over 10 billion pieces of content with pixel-level signals designed to survive compression and editing. (Google)

- The deepfake AI market was valued at approximately $765 million in 2024 and is projected to grow to $19.8 billion by 2033. (Grand View Research)

- The global deepfake market overall is projected to reach $13.9 billion by 2032 with a 42.79% CAGR. (Keepnet)

What Are Deepfakes?

Deepfakes are AI-generated images, videos, or audio that realistically imitate real people or events that never happened. They use deep learning models trained on large datasets to replicate faces, voices, and movements with high accuracy. This technology supports creative and commercial uses, while it can enable misinformation, fraud, and impersonation when misused.

The Billion-Dollar Impersonation: CEO Fraud and BEC

Corporate fraud through CEO impersonation, a high-value subset of whaling in cybersecurity, represents one of the most dangerous applications of deepfake technology. Attackers impersonate CEOs, CFOs, and other executives to trick finance workers into authorizing large wire transfers.

The results have been catastrophic for victims, and the number of deepfake-related incidents targeting company leaders continues to climb. CEO fraud now targets at least 400 companies per day.

Below are examples of costly deepfake frauds that made headlines:

$25M Deepfake Heist Fooled Arup Staff Fast

Breach Disclosed: 16 May, 2024

In January 2024, attackers executed a highly convincing deepfake scam against Arup, leading to 15 fraudulent transfers totaling $25.6 million in a single day. A finance employee in Hong Kong received a phishing email impersonating the CFO, followed by a video call where every participant was an AI-generated executive.

The employee authorized the transfers under perceived executive direction. The fraud surfaced during internal verification, prompting immediate escalation to authorities. Investigations remain ongoing, no arrests have been reported, and funds remain unrecovered as of early 2025. (PurpleSec)

Ferrari CEO Voice Clone Scam Nearly Cost Millions

Breach Disclosed: 26 Jul, 2024

In July 2024, Ferrari narrowly avoided a deepfake-enabled fraud attempt where attackers impersonated CEO Benedetto Vigna using AI-generated voice cloning during a WhatsApp call. The scam targeted a senior executive with urgent requests tied to a confidential acquisition and a potential financial transaction.

The attacker’s voice closely matched Vigna’s accent, increasing credibility. The attempt failed when the executive challenged the caller with a personal verification question, forcing the attacker to disconnect. No funds were transferred, and Ferrari launched an internal investigation immediately after the incident. (motor1.com)

AU$37M Deepfake Scam Revives €220K Warning

Breach Disclosed: 28 Aug, 2024

In 2024, Australian authorities highlighted a business loss of AU$37 million (about US$25 million) from a single deepfake scam that used executive impersonation to trigger fraudulent transfers. The tactic mirrors an early corporate warning from 2019, when a UK energy firm sent €220,000 after a cloned voice of a senior executive demanded an urgent payment. Together, the cases show how synthetic fraud exploits urgency, authority, and weak payment verification inside global companies. (Australian Federal Police)

Elon Musk Deepfake Scam Exposed New Fraud Risk

Breach Disclosed: 28 Jun, 2024

In 2022, a deepfake video of Elon Musk spread online to push a crypto scam promising 30% daily returns, showing how synthetic media can turn celebrity trust into financial bait. Business.com reported on 28 Jun, 2024 that more than 10% of companies had already faced attempted or successful deepfake fraud, while losses from successful incidents reached as high as 10% of annual profits. The pattern is clear: deepfakes now threaten both consumers and corporate payment controls at meaningful scale. (Business.com)

Weaponized Voices: The Surge in Voice Cloning Scams

Voice cloning is the leading attack vector in deepfake fraud, requiring as little as 3 seconds of audio to generate a voice with up to 85% similarity, which enables highly convincing impersonation in urgent scam scenarios. Voice deepfakes increased 680% year-over-year in 2024 based on analysis of over 1.2 billion calls, while studies show humans struggle to distinguish synthetic voices from real ones.

Consumer concern is rising, with 67.5% of U.S. consumers reporting anxiety about deepfake threats in financial services. Exposure is widespread, as 1 in 10 people report receiving cloned voicemails, and 77% of victims lost money, while 40% would still respond to a request from a familiar voice. Effective defense requires moving beyond legacy authentication toward multifactor verification, real-time liveness detection, and risk-based controls to secure voice interactions.

Why Humans Are Vulnerable To Deepfakes

Human detection of high-quality deepfakes is extremely low, with only 24.5% accuracy and just 0.1% of people correctly identifying all media in a 2025 iProov study, while broader research places average detection near chance. Repeated exposure to synthetic content increases perceived credibility, reinforcing the illusory truth effect and raising belief in misinformation even among informed users.

The “liar’s dividend” further breaks trust, where real evidence can be dismissed and fake media accepted, which means human judgment alone cannot validate digital content. Effective defense requires AI-based detection and structured verification processes rather than relying on intuition.

The Social Crisis Of Non-Consensual Intimate Imagery (NCII)

Deepfake abuse is driving a social crisis centered on non-consensual intimate imagery, with women disproportionately targeted and institutions struggling to respond. Students use deepfake tools to create explicit content of peers and teachers, while women face targeting rates 4.5× higher than men. Data shows 96–98% of deepfake content online is explicit, with 99–100% of victims being female, and 80% of Telegram-shared deepfake content classified as pornographic.

The impact extends into healthcare, where fabricated videos of doctors promote scams and undermine trust in clinical evidence. Public concern continues to rise as generative tools reduce the barrier to creating harmful content, prompting regulatory action such as laws enacted across 46 U.S. states by 2025, the TAKE IT DOWN Act in May 2025, and EU AI Act transparency rules set for August 2026.

Real-World Impacts Of Deepfake Phishing

Deepfake phishing fundamentally alters how organizations evaluate trust, communication, and security, introducing deception that consistently bypasses human judgment.

Voice cloning allows attackers to mimic trusted individuals using seconds of audio, which creates urgent scenarios that drive financial loss, while research shows people frequently rate synthetic voices as indistinguishable from real ones.

The liar’s dividend amplifies this risk, as authentic evidence can be dismissed as fake while fabricated content can trigger real consequences such as market disruption or reputational damage.

Many organizations rely on technical fixes, yet this approach fails to address a deeper breakdown in how information is verified. Resilient organizations embed verification into core workflows and treat trust as a system-level control rather than a human assumption.

Deepfake Phishing Trends to Watch in 2026

Deepfake phishing will intensify in 2026 as attackers combine real-time media manipulation, automation, and scalable fraud services to bypass traditional controls.

The following trends define where organizations face the highest risk:

1. Cross-Channel Attack Campaigns

Attackers are coordinating deepfake video, voice cloning, and social engineering across multiple platforms in a single operation. Campaigns often move from messaging apps to video calls and email, which increases credibility and reduces the chance of detection.

2. Real-Time Deepfakes In Video Calls

Attackers are using live video deepfakes during meetings to impersonate executives and authorize fraudulent transactions. Resemble AI reported 980 corporate infiltration cases in Q3 2025, showing how real-time manipulation during video calls such as Zoom meetings can bypass standard verification controls.

3. Deepfake Job Candidates

Fraudulent applicants are using AI-generated voices and synthetic video to pass remote interviews and gain access to internal systems. Pindrop found that 1 in 4 North Korean IT job applicants use deepfakes to conceal their identity, which highlights a growing insider threat vector. Security teams evaluating this exposure can review our guide to the risks and mitigation of insider threats for control recommendations.

4. Agentic AI Fraud

Autonomous AI systems are executing multi-step fraud schemes without constant human input. These systems can create synthetic identities, interact with targets, and adapt tactics in real time, enabling attacks such as fake employees passing interviews or scams targeting financial assets.

5. Fraud-As-A-Service Platforms

Deepfake tools are now widely available through dark web marketplaces and spoofing-as-a-service platforms. This access removes the need for advanced technical skills, allowing more threat actors to conduct sophisticated impersonation attacks at scale.

Why Are Deepfake Phishing Attacks Increasing?

Three primary drivers are accelerating the growth of deepfake attacks.

1. Collapsing barriers to entry. The tools required to create convincing deepfakes are widely available, inexpensive, and require minimal technical expertise. Dark web marketplaces sell scamming software from $20 to thousands of dollars. A WEF contributor documented an automated disinformation campaign built for less than $400 per month. A convincing 60-second deepfake video can be produced in under 25 minutes at zero cost using freely available tools.

2. Explosive growth of generative AI. The generative AI market is projected to grow to approximately $400 billion by 2031. Every advancement in AI capabilities that benefits legitimate users simultaneously empowers attackers with more realistic creation tools.

3. Human vulnerability at scale. Humans detect high-quality video deepfakes at a rate of just 24.5%. Social media platforms amplify manipulated content through algorithmic distribution, and the illusory truth effect makes repeatedly viewed content seem more credible. Training alone cannot close this gap. 82% of phishing emails are now generated by AI tools, making deepfake scams faster and more convincing than ever.

How To Protect Your Organization Against Deepfake Phishing

Protecting an organization against deepfake phishing requires layered controls that combine verification processes, technical detection, and employee discipline. The following measures reduce the risk of successful impersonation attacks:

- Establish Code-Word Systems: Use pre-shared code words or private verification questions for identity confirmation during calls. This control adds a simple but effective layer that attackers cannot easily replicate.

- Mandate Multi-Channel Verification: Require confirmation for sensitive actions through a separate, trusted channel such as a known phone number or secure internal system. Single-channel approval creates exposure, while independent verification interrupts impersonation attempts.

- Deploy AI-Powered Detection: Use detection tools that analyze audio, video, and documents in real time. Integrate these systems into identity verification workflows, video conferencing platforms, and contact center operations to identify synthetic media during interactions.

- Implement Strict Financial Controls: Require multiple independent approvals for high-value transactions. No employee should authorize payments based solely on a video call or email instruction, regardless of the requester’s apparent authority.

- Redesign Verification Protocols: Replace knowledge-based authentication with behavioral biometrics, liveness detection, and signal-based verification methods. These approaches identify inconsistencies that indicate manipulated or synthetic content.

- Build Deepfake Awareness Training: Extend security awareness training to include indicators such as facial distortion, lip-sync mismatch, and unnatural audio patterns. Training must reinforce process adherence over intuition, since the average deepfake simulation pass rate is just 44%, a sign of how often employees trust convincing forgeries.
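
Two of the controls above, multi-channel verification and multiple independent approvals, can be expressed as a simple release check in a payment workflow. The following is a minimal sketch under stated assumptions, not a reference implementation: the `PaymentRequest` type, the channel names, the $10,000 threshold, and the two-approver rule are all hypothetical values chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical policy values; a real organization would set its own.
HIGH_VALUE_THRESHOLD = 10_000
REQUIRED_APPROVALS = 2


@dataclass
class PaymentRequest:
    amount: float
    requested_via: str  # channel the instruction arrived on, e.g. "video_call"
    verified_via: set[str] = field(default_factory=set)  # channels used to confirm it
    approvers: set[str] = field(default_factory=set)  # distinct people who signed off


def release_blockers(request: PaymentRequest) -> list[str]:
    """Return the reasons a payment must not be released (empty list means OK)."""
    blockers = []

    # Confirmation on the same channel as the request does not count,
    # because an impersonator controls that channel.
    independent_channels = request.verified_via - {request.requested_via}
    if not independent_channels:
        blockers.append("no confirmation through a separate trusted channel")

    # High-value payments need several distinct approvers.
    if (request.amount >= HIGH_VALUE_THRESHOLD
            and len(request.approvers) < REQUIRED_APPROVALS):
        blockers.append(f"fewer than {REQUIRED_APPROVALS} independent approvals")

    return blockers


# A transfer requested on a video call with no follow-up is blocked twice over.
urgent = PaymentRequest(amount=250_000, requested_via="video_call")
print(release_blockers(urgent))

# The same transfer after a callback to a known number and two sign-offs.
checked = PaymentRequest(
    amount=250_000,
    requested_via="video_call",
    verified_via={"callback_known_number"},
    approvers={"cfo", "controller"},
)
print(release_blockers(checked))
```

The design choice worth noting is that the check returns reasons rather than a bare yes or no, so a blocked request tells the employee which verification step is still missing instead of leaving the decision to intuition.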

Why Bright Defense Delivers Quality Cybersecurity Compliance Services

Bright Defense delivers quality cybersecurity compliance services through data-driven expertise, real-world threat alignment, and structured implementation. The team builds compliance programs around current risks: deepfake fraud attempts have surged 2,137% in three years and, as of 2024, occurred every five minutes, so compliance must reflect active threats rather than static controls.

Each engagement connects controls to real attack paths such as identity fraud and phishing, while reinforcing processes and employee readiness. This approach addresses common gaps, where 80% of organizations lack response protocols and only 5% maintain mature prevention strategies, resulting in compliance programs that perform in real-world conditions.

Frequently Asked Questions About Deepfake Statistics

How fast are deepfake attacks growing?

Deepfake fraud attempts increased 2,137% over the last three years according to Signicat. In 2024, deepfake attacks occurred at a rate of one every five minutes, according to Entrust. Resemble AI recorded 2,031 verified incidents in Q3 2025 alone. 85% of organizations experienced at least one deepfake incident in the past year.

How much money has been lost to deepfake fraud?

Businesses lost an average of nearly $500,000 per deepfake-related incident in 2024, with some large enterprises losing up to $680,000. IRONSCALES found mean losses exceeding $280,000 per incident. Deloitte projects that generative AI-enabled fraud losses in the U.S. could reach $40 billion by 2027. Global scam losses across all AI-enabled fraud reached $1 trillion in 2024.

Which industries are most targeted by deepfake fraud?

The crypto sector faces the highest rate of deepfake-related fraudulent activity, followed by lending and traditional banks according to Entrust. 42.5% of fraud attempts in the financial sector are now AI-driven, according to Signicat. iGaming saw a 1,520% spike in deepfake incidents.

Can humans detect deepfakes?

Human detection rates for high-quality video deepfakes are just 24.5%. A 2025 iProov study found only 0.1% of participants correctly identified all fake and real media. A meta-analysis of 56 studies found average detection accuracy of just 55.54%, barely above chance. 70% of people say they are not confident they can distinguish a real voice from a cloned one.

What was the largest deepfake fraud case?

The largest publicly known case involved a finance worker at Arup who was tricked into transferring $25 million after attending a video call where every participant was a deepfake recreation of colleagues and executives. An Australian company lost a comparable amount (AU$37 million) in a separate deepfake attack.

How are deepfakes used in elections?

Researchers identified over 100 deepfake video ads impersonating UK PM Rishi Sunak on Meta in a single month. NewsGuard documented false election claims across more than 370 websites in 2024. Resemble AI recorded 482 politically motivated deepfake incidents in Q3 2025. In January 2024, a deepfaked voice resembling President Biden was used in a robocall urging New Hampshire voters not to vote.

Where do deepfakes spread most on social media?

YouTube accounts for 29.9% of tracked deepfake cases, followed by Instagram at 26.8%, Facebook at 18.8%, TikTok at 18.3%, and WhatsApp at 6.3% according to Resemble AI’s Q3 2025 report.

How much does it cost to create a deepfake?

Dark web scamming software is available from as little as $20. A convincing 60-second deepfake video can be produced in under 25 minutes at zero cost. The Biden robocall deepfake cost $1 and took under 20 minutes to produce.

How big is the deepfake detection market?

The deepfake detection market is expected to grow 42% annually, rising from $5.5 billion in 2023 to $15.7 billion by 2026. The deepfake AI market was valued at $765 million in 2024 and is projected to reach $19.8 billion by 2033.
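The growth figures above can be sanity-checked with basic compound-growth arithmetic. The snippet below is a minimal sketch, assuming the endpoints span three compounding years (2023 to 2026) and nine (2024 to 2033); the function names `project` and `cagr` are illustrative and not taken from any cited report.

```python
def project(start_value: float, annual_rate: float, years: int) -> float:
    """Value after compounding `annual_rate` for `years` periods."""
    return start_value * (1 + annual_rate) ** years


def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


# Detection market: $5.5B in 2023 growing 42% a year (figures from the text).
# Assumption: three full compounding periods between 2023 and 2026.
detection_2026 = project(5.5, 0.42, 2026 - 2023)

# Deepfake AI market: $765M in 2024 to $19.8B by 2033 (figures from the text).
# Assumption: nine full compounding periods between 2024 and 2033.
ai_market_rate = cagr(0.765, 19.8, 2033 - 2024)

print(f"Projected detection market in 2026: ${detection_2026:.1f}B")
print(f"Implied annual growth of the deepfake AI market: {ai_market_rate:.0%}")
```

Under these assumptions, the 42% rate reproduces the $15.7 billion projection, and the deepfake AI market figures imply annual growth of roughly 43 to 44%.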

Are organizations prepared for deepfake attacks?

Not even close. 63% of organizations have not invested any budget into deepfake defense. 80% lack formal protocols to respond to deepfake attacks. Only 5% have a comprehensive deepfake prevention strategy. The average deepfake simulation pass rate is just 44%.

What is the best way to verify someone’s identity on a video call?

Ask the person to perform an action that can cause a real-time deepfake to glitch, such as turning their head sharply to the side. Better yet, require a personal verification question that only the real person could answer, as the Ferrari executive did. Organizations should combine these live challenges with AI-powered liveness detection for maximum protection.
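The layered approach described above can be expressed as a simple all-checks-must-pass rule. This is a minimal sketch rather than a reference implementation: the `CallChecks` fields, the `identity_verified` function, and the 0.9 liveness threshold are hypothetical names and values chosen for illustration, and the liveness score stands in for whatever output a real detection tool provides.

```python
from dataclasses import dataclass


@dataclass
class CallChecks:
    live_challenge_passed: bool      # e.g. a sharp head turn showed no glitching
    personal_question_passed: bool   # answer only the real person would know
    liveness_score: float            # hypothetical 0.0-1.0 score from a liveness tool


# Assumed cutoff for illustration only; not a figure from the article.
LIVENESS_THRESHOLD = 0.9


def identity_verified(checks: CallChecks,
                      threshold: float = LIVENESS_THRESHOLD) -> bool:
    """Approve only when every layer passes; any single failure blocks approval."""
    return (
        checks.live_challenge_passed
        and checks.personal_question_passed
        and checks.liveness_score >= threshold
    )


# A caller who passes the live challenge but fails the personal question
# is rejected, even with a high liveness score.
suspicious = CallChecks(True, False, 0.99)
genuine = CallChecks(True, True, 0.95)
print(identity_verified(suspicious), identity_verified(genuine))
```

Requiring every layer to pass reflects the point that no single signal, human or automated, should be trusted on its own.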

The Path Forward: Building Resilience in the Synthetic Age

Deepfake threats are accelerating faster than current defenses, with fraud attempts rising 2,137% in three years, projected U.S. losses reaching $40 billion by 2027, and human detection accuracy falling to 24.5% for advanced fakes. Organizations face immediate risk and must respond with AI-driven detection, strict verification processes, and ongoing awareness training that reflects human limitations.

Fragmented efforts across sectors weaken overall defense, so coordinated action is necessary. Long-term resilience depends on redesigning systems with the assumption that any digital content can be fabricated, embedding verification into critical workflows, and prioritizing layered detection over trust.
