Updated: May 8, 2026

Why SOC 2 is Critical for Your AI Startup

Building an AI or SaaS startup is a high-stakes challenge. Investors, partners, and customers want to know they can trust you with their data from day one. In a world where AI systems process massive amounts of sensitive information, a single security misstep can damage credibility and stall growth.

In fact, 65% of consumers say they would stop doing business with a company after a single data breach. SOC 2 compliance signals that your startup takes security, availability, and data integrity seriously. It shows you have the internal controls in place to protect information and operate with transparency, which are key factors that help you win trust in a competitive market.

In this article, you’ll learn why SOC 2 matters for AI startups, how it impacts customer trust and business growth, and the key steps to prepare for compliance. By the end, you’ll know exactly how SOC 2 can become a competitive advantage for your business.

What Is SOC 2 and Why Does It Matter for AI Startups?

SOC 2 is an auditing standard developed by the American Institute of Certified Public Accountants (AICPA) that evaluates how a company manages customer data. It focuses on five Trust Services Criteria: security, availability, processing integrity, confidentiality, and privacy.

A SOC 2 report provides independent validation that an organization has the necessary policies, procedures, and technical safeguards to protect data.

SOC 2 compliance is not legally required for AI startups, but it is often expected, especially by users, partners, and enterprise clients. AI companies handle confidential data such as personal identifiers, financial records, and proprietary business information, so it is important to seek AI consulting only from AI development companies with experience handling that kind of data.

Without a recognized standard like SOC 2, convincing customers and investors that data is managed securely becomes difficult.

Meeting SOC 2 requirements demonstrates a strong security compliance posture, supports risk assessments for potential vulnerabilities, and helps avoid manual compliance tasks that slow growth.

Does SOC 2 Cover AI?

SOC 2 does not contain AI-specific requirements, but it applies to AI companies in the same way it applies to any technology provider that handles customer data.

The framework focuses on how an organization protects data under the five Trust Services Criteria (security, availability, processing integrity, confidentiality, and privacy), regardless of whether that data is processed through traditional software systems or AI models.

Maintaining security protocols and conducting periodic gap analysis can help AI companies address potential weaknesses while meeting SOC 2 expectations.
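To make the idea of a gap analysis concrete, here is a minimal Python sketch. It assumes a hand-maintained list of controls, each tagged with one of the five criteria; the control names and the `Control` and `gap_analysis` helpers are hypothetical illustrations, not AICPA identifiers or any tool's API.

```python
from dataclasses import dataclass

# The five Trust Services Criteria categories named in this article.
CATEGORIES = ("security", "availability", "processing integrity",
              "confidentiality", "privacy")


@dataclass
class Control:
    name: str           # hypothetical label, not an official control ID
    category: str       # one of CATEGORIES
    implemented: bool   # does the control exist today?
    has_evidence: bool  # is there documentation an auditor could review?


def gap_analysis(controls, in_scope):
    """Return, per in-scope category, the controls that still need work."""
    gaps = {category: [] for category in in_scope}
    for control in controls:
        if control.category not in gaps:
            continue  # category is out of scope for this review
        if not (control.implemented and control.has_evidence):
            gaps[control.category].append(control.name)
    return gaps


# Illustrative data only; a real inventory would come from your own records.
controls = [
    Control("MFA on admin accounts", "security", True, True),
    Control("Quarterly access review", "security", True, False),
    Control("Encryption of stored prompts", "confidentiality", False, False),
    Control("Uptime monitoring", "availability", True, True),
]

report = gap_analysis(controls, in_scope=("security", "confidentiality"))
for category, missing in report.items():
    print(f"{category}: {missing or 'no gaps found'}")
```

Treating "implemented but undocumented" as a gap is a deliberate choice in this sketch, since auditors review evidence as well as the controls themselves.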

Benefits of SOC 2 for AI Companies

SOC 2 compliance delivers tangible benefits for AI companies that manage sensitive data and aim to win the trust of customers, partners, and investors.

The following points highlight how meeting this standard can strengthen your security posture and create new business opportunities:

1. Strengthens Customer Trust

A SOC 2 report offers independent confirmation that your security practices meet industry standards for protecting sensitive information. This is critical for AI companies that process personal identifiers, financial data, or proprietary business records. Strong security controls also demonstrate that your company can consistently protect assets across multiple environments.

According to a study, 83% of consumers say they are more likely to do business with companies they believe protect their data well. SOC 2 gives you a clear way to demonstrate that commitment, turning security into a competitive advantage.

2. Meets Enterprise Procurement Requirements

Large enterprises often make SOC 2 a non-negotiable part of their vendor evaluation process. Without it, your company may be automatically excluded from RFPs or partnership discussions. Many also expect vendors to perform readiness assessments before the audit stage, which can shorten timelines and improve buyer confidence.

A study reports that 80% of consumers are more likely to purchase from companies they believe protect their personal information. Achieving SOC 2 supports that trust while also meeting procurement requirements tied to vendor security, positioning your business for stronger customer relationships and expanded contract opportunities.

3. Demonstrates Security Maturity

SOC 2 compliance proves that your company has well-documented policies, defined processes, and active security monitoring in place.

This maturity is highly valued by investors and partners who want assurance that your operations can scale securely. Conducting risk assessments regularly strengthens the ability to address emerging threats.

4. Reduces Risk of Data Breaches

SOC 2 controls target core areas such as access management, encryption, monitoring, and incident response. These measures reduce vulnerabilities that cybercriminals exploit.

IBM’s 2024 Cost of a Data Breach Report found the average global cost of a breach is now $4.88 million, with even higher costs for technology companies.

For high-growth AI firms, critical infrastructure protection is vital to maintaining uptime and service reliability.
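Access management, one of the control areas mentioned above, is often the easiest to check with a small script. The sketch below flags accounts that lack multi-factor authentication or have been idle for a long time. The 90-day threshold, the field names, and the `flag_accounts` helper are assumptions for illustration; SOC 2 does not prescribe specific values.

```python
from datetime import date, timedelta


def flag_accounts(accounts, today, max_idle_days=90):
    """Return names of accounts that lack MFA or have been idle too long."""
    cutoff = today - timedelta(days=max_idle_days)
    flagged = []
    for account in accounts:
        # Either condition alone is enough to send the account for review.
        if not account["mfa_enabled"] or account["last_login"] < cutoff:
            flagged.append(account["user"])
    return flagged


# Illustrative data; in practice this would be exported from an identity provider.
accounts = [
    {"user": "alice", "mfa_enabled": True, "last_login": date(2026, 4, 30)},
    {"user": "bob", "mfa_enabled": False, "last_login": date(2026, 5, 1)},
    {"user": "carol", "mfa_enabled": True, "last_login": date(2025, 11, 2)},
]

flagged = flag_accounts(accounts, today=date(2026, 5, 8))
print(flagged)
```

Passing `today` in explicitly keeps the check reproducible, which helps when the same output is later kept as audit evidence.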

5. Supports Regulatory Readiness

While SOC 2 is not a legal requirement, its principles align closely with major data protection laws such as the GDPR and CCPA.

Adopting SOC 2 practices now can make future compliance efforts more efficient and lower the chance of penalties for non-compliance. A well-structured compliance process in place today can help prevent expensive remediation work later.

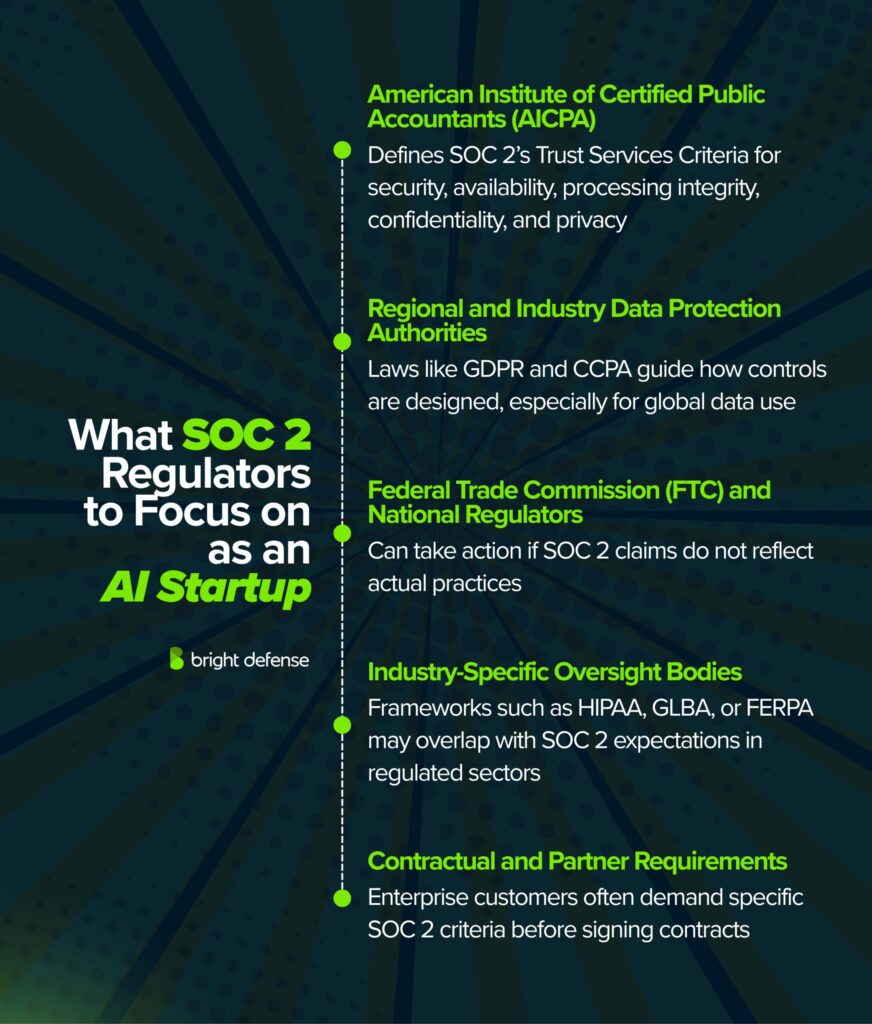

Which SOC 2 Regulators Should an AI Startup Focus On?

SOC 2 is not enforced by a government body. It is maintained by the American Institute of Certified Public Accountants (AICPA). When you pursue SOC 2 compliance, you work with independent auditors who follow the AICPA’s Trust Services Criteria and assess your compliance processes in detail.

These auditors review your policies, controls, and evidence collection methods to see if you meet the standard. Clear documentation and, when possible, the use of compliance automation can make these reviews faster.
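As a rough illustration of automated evidence collection, the following Python sketch wraps an exported artifact in a record with a timestamp and a SHA-256 hash, so a reviewer can confirm the file has not changed since it was collected. The record fields and the `make_evidence_record` helper are hypothetical; commercial compliance tools use their own formats.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_evidence_record(control, description, content, collected_at=None):
    """Describe one piece of audit evidence with a timestamp and content hash."""
    collected_at = collected_at or datetime.now(timezone.utc)
    return {
        "control": control,            # hypothetical control label
        "description": description,
        "sha256": hashlib.sha256(content).hexdigest(),
        "collected_at": collected_at.isoformat(),
    }


# Illustrative artifact: a tiny CSV export from an access review.
export = b"user,mfa_enabled\nalice,true\n"

record = make_evidence_record(
    control="access-review",
    description="Quarterly access review export",
    content=export,
    collected_at=datetime(2026, 5, 8, tzinfo=timezone.utc),
)
print(json.dumps(record, indent=2))
```

Storing the hash alongside the artifact lets anyone recompute it later and compare, which is a simple way to show the evidence is unchanged.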

While the AICPA sets the framework, AI startups also need to know about the regulatory and industry bodies that influence data handling practices alongside SOC 2 requirements.

1. American Institute of Certified Public Accountants (AICPA)

This is the governing authority for SOC 2. The AICPA defines the Trust Services Criteria covering security, availability, processing integrity, confidentiality, and privacy, and provides the official guidance auditors follow. All SOC 2 compliance work ultimately has to align with AICPA standards, especially for any service organization aiming to show it follows reliable practices.

2. Regional and Industry Data Protection Authorities

SOC 2 overlaps with privacy and data security requirements from laws like the EU’s General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). These laws are not part of SOC 2, but they affect how your controls are built. For AI startups that operate with global data, reviewing these regulations early will help avoid costly rework and define the audit scope for SOC 2.

3. Federal Trade Commission (FTC) and Other National Regulators

In the United States, the FTC enforces data privacy and security rules, especially when businesses misrepresent their security posture. If your AI startup claims SOC 2 compliance but does not meet the actual requirements, regulators can take action. Preparing for audits regularly helps avoid discrepancies that could trigger enforcement.

4. Industry-Specific Oversight Bodies

If your AI product serves regulated sectors such as healthcare, finance, or education, extra frameworks like HIPAA for health data, GLBA for financial institutions, or FERPA for student records may overlap with SOC 2. Organizations in these sectors often require a control environment with robust security controls that combine SOC 2 with industry-specific safeguards.

5. Contractual and Partner Requirements

Large enterprise customers often have their own compliance teams that review SOC 2 reports. Their procurement teams can act like a de facto regulator by demanding that certain SOC 2 criteria be met before doing business. Meeting these requirements early can make contracting easier and shorten sales cycles.

For AI startups, the main goal is to design SOC 2 controls that meet the AICPA’s framework while also satisfying regional laws, industry-specific rules, and enterprise procurement standards.

Can AI Replace a SOC Analyst?

AI can handle parts of a SOC analyst’s job such as log analysis, anomaly detection, and basic incident triage. It processes large amounts of data quickly and flags threats in real time, which cuts down on manual work.

AI cannot fully replace a SOC analyst. Human analysts handle complex attacks, understand business context, and make judgment calls that AI cannot match. A 2024 SANS Institute survey found that 76 percent of security leaders view AI as a support tool, not a replacement for human SOC teams.

The best approach combines AI for speed and scale with analysts for decision-making, strategic response, and protection of the organization’s most critical assets.
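The division of labor described above can be sketched in a few lines of Python. This example uses a simple z-score check as a statistical stand-in for anomaly detection (it is not a machine-learning model) and hands anything unusual to a human analyst. The threshold of 3.0, the sample data, and the `flag_anomalies` helper are assumptions for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, recent, threshold=3.0):
    """Return the hours whose count sits far above the baseline average."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for hour, count in recent:
        # With a perfectly flat baseline there is no spread to measure,
        # so this sketch skips scoring instead of dividing by zero.
        z = (count - mu) / sigma if sigma else 0.0
        if z > threshold:
            flagged.append(hour)
    return flagged


# Illustrative failed-login counts per hour from a normal period.
baseline = [4, 6, 5, 7, 5, 6, 4, 5]
# New observations to score against that baseline.
recent = [("09:00", 6), ("10:00", 41), ("11:00", 5)]

flagged = flag_anomalies(baseline, recent)
for hour in flagged:
    print(f"{hour}: unusual failed-login volume, route to analyst for review")
```

The script only decides what deserves attention; deciding whether the spike is an attack, a misconfigured service, or a password reset campaign stays with the analyst.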

Is ChatGPT SOC 2 Compliant?

OpenAI’s enterprise-focused products (ChatGPT Enterprise, ChatGPT Team, ChatGPT Edu, and the API Platform) have completed a SOC 2 Type II audit covering security and confidentiality controls. Consumer versions of ChatGPT, such as the free and Plus tiers, are not covered by that report. The audit evaluates the organization’s adherence to the applicable criteria over a review period before the auditor issues the report.

The SOC 2 reports are available through the OpenAI Trust Portal where organizations can request access to review the audit results, helping prospective customers and potential clients confirm the company’s security posture.

How Bright Defense Can Help AI Startups with SOC 2

SOC 2 compliance can feel overwhelming for a fast-moving AI startup, but it is also one of the strongest trust signals you can give to customers, investors, and partners.

At Bright Defense, we guide AI companies through the process with a focus on both security and business growth.

Our experience with SOC 2 and deep understanding of AI data challenges means we can help you put the right controls in place without slowing innovation.

With the right guidance, compliance becomes more than a checkbox. It becomes a competitive edge that helps you win enterprise opportunities and build lasting trust.

FAQs

SOC 2 is widely used in vendor risk reviews, so enterprise buyers often ask for it when your product processes their data or supports their operations, especially during procurement and security questionnaires.

Security is always central, and confidentiality and privacy often become the deciding factors for AI systems that ingest prompts, customer documents, conversation logs, or user identifiers, since the Trust Services Criteria explicitly cover those areas.

No. SOC 2 is focused on controls around the systems that provide your service and how those systems protect information and operate, not whether model outputs are correct or fair.

AI tool use inside workplaces can create data exposure paths when accounts are outside company identity systems, and Verizon reports regular GenAI access on corporate devices with many accounts tied to non-corporate emails and others lacking integrated authentication.

Yes. IBM’s Cost of a Data Breach Report 2025 highlights that among organizations reporting an AI-related security incident, 97% lacked proper AI access controls and 63% lacked AI governance policies, which makes control design and evidence important for AI startups selling trust.

A SOC 2 Type I report can be a first step when timing is tight because it evaluates control design at a point in time, while Type II also tests operating effectiveness over a period of time.

Yes. SOC reports are used to assess risks tied to outsourced services, and buyers commonly expect you to show how you manage vendor access, data handling, and dependency risk inside your own control environment.

A practical first week outcome is a clear system scope and data flow map for prompts, files, logs, and training data sources, plus an initial risk view that follows a recognized AI risk approach such as NIST AI RMF’s GOVERN, MAP, MEASURE, and MANAGE structure.

SOC 2 compliance for AI means the AI product or AI-enabled service is in scope for a SOC 2 examination, and the organization’s controls are assessed against the Trust Services Criteria that apply, such as Security, Availability, Processing Integrity, Confidentiality, and Privacy.

ISO/IEC 27001 is not inherently better than SOC 2 because ISO/IEC 27001 is an ISMS requirements standard used for certification, while SOC 2 is an AICPA examination report on controls tied to the Trust Services Criteria for a defined system and scope.

Supply chain security is critical in AI because AI systems often depend on third-party data, models, software, and infrastructure, and those dependencies can introduce risk through the components themselves and through how they are integrated and operated. NIST flags third-party software, hardware, and data as a major complication for AI risk measurement and management, and WEF survey data shows large organizations cite third-party and supply chain vulnerabilities as a top resilience challenge.

AI is unlikely to fully take over a Security Operations Center because it can automate parts of alert triage, correlation, and detection, while accountability, incident decision-making, and business-context judgments still sit with people. WEF’s 2026 findings support a major shift toward AI in cybersecurity work, while also framing AI-related risk growth as a leading concern that needs governance and oversight.
